Thanks for the tip! I took a look and it seems like Recognize uses this: https://github.com/jordipons/musicnn

Last update was 4 years ago but will give it a try this weekend.

I’m thinking of ripping my CD collection again. I’m researching a way to use an LLM to tidy up the metadata.

If you ever figure out how to use AI to determine the genre(s) of a song, let me know. Have been looking for something like that for quite a while.

The RFC you linked recommends that no new X- prefixed headers should be used.

The paragraph you quoted does not say you should use the X- prefix, only comments on how it was used.

See section 3 for the creation of new parameters: https://datatracker.ietf.org/doc/html/rfc6648#section-3

I still work on software that extensively uses X- headers.

I wouldn’t worry too much about it. The reason they give is mostly that it is annoying if an X- header suddenly becomes standardized and you end up having to support both X-Something and Something. Most likely a non-issue with real custom headers.
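To make the "support both" annoyance concrete, here is a minimal sketch of a lookup that accepts either spelling. The header name `Something` and the plain mapping of headers are placeholders for illustration, not taken from any particular framework.

```python
from typing import Mapping, Optional


def get_header(headers: Mapping[str, str], name: str) -> Optional[str]:
    """Return the value for `name`, falling back to the legacy `X-` variant.

    Header names are compared case-insensitively, as HTTP requires.
    """
    lowered = {key.lower(): value for key, value in headers.items()}
    # Prefer the standardized name, then the old X- prefixed one.
    for candidate in (name.lower(), "x-" + name.lower()):
        if candidate in lowered:
            return lowered[candidate]
    return None
```

A caller would use `get_header(request_headers, "Something")` and never care which of the two spellings the other side sent.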

We don’t have many unit tests that test against live APIs, most use mock APIs for testing.

The only use for this header would be if somebody sees it during development, at which point it would already be in the documentation, or if you explicitly add a feature that checks whether the header is present. I don’t see that happening any time soon since we get mailed about deprecations as well.

I don’t really get the purpose of a header like this, who is supposed to check it? It’s not like developers casually check the headers returned by an API every week.

Write them a mail if you see deprecated functions being used by a certain API key, probably much more likely to reach somebody that way.

Also, TIL that the IETF deprecated the X- prefix more than 10 years ago. Seems like that one didn’t pan out.

Yes, because Docker becomes significantly more powerful once every container has a different publicly addressable IP.

Although IPv6 support in Docker is still lacking in some areas right now, so add that to the long list of IPv6 migration todos.

There is this notion that IPv6 exposes any host directly to the internet, which is not correct. When the client IP is attacked “directly” the attacker still talks to the router responsible for your network first and foremost.

While a misconfiguration on the router is possible, the same is possible on IPv4. In fact, it’s even a “feature” in many consumer routers called “DMZ host”, which exposes all ports to a single host. Which is obviously a security nightmare in both IPv4 and IPv6.

Just as CGNAT is a thing on IPv4, you can have as many firewalls behind one another as you want. Just because the target IP always is the same does not mean it suddenly is less secure than if the IP gets “NATted” 4 times between routers. It actually makes errors more likely because diagnosing and configuring is much harder in that environment.

Unless you’re aggressively rotating through your v6 address space, you’ve now given advertisers and data brokers a pretty accurate unique identifier of you. A much more prevalent “attack” vector.

That is what the privacy extension was created for. With it enabled, IP addresses get rotated pretty regularly, and there are much better ways to keep track of users than their IP addresses anyway. Many implementations of the privacy extension still have issues though, such as rotation intervals that are too long or the extension not even being enabled by default.

Hopefully that will get better when IPv6 becomes the default after the heat death of the universe.

With NAT on IPv4 I set up port forwarding at my router. Where would I set up the IPv6 equivalent?

The same thing, except instead of the router translating 123.123.123.123 to 192.168.0.250, it will directly route abcd:abcd::beef to abcd:abcd::beef.

Assuming you have multiple hosts in your IPv6 network you can simply add “port forwardings” for each of them. Which is another advantage for IPv6, you can port forward the same port multiple times for each of your hosts.
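Roughly, the router's job boils down to a deny-by-default lookup keyed by host and port. The sketch below is only an illustration of that idea; the addresses come from the documentation range and the `ALLOWED` table is made up, it is not how any real firewall stores its rules.

```python
from ipaddress import IPv6Address

# Hypothetical allow list: (destination address, destination port).
# Everything not listed is dropped, just like an IPv4 router without forwards.
ALLOWED = {
    (IPv6Address("2001:db8::beef"), 443),
    (IPv6Address("2001:db8::cafe"), 443),  # same port, different host
}


def is_allowed(destination: str, port: int) -> bool:
    """Return True only if an explicit rule exists for this host and port."""
    return (IPv6Address(destination), port) in ALLOWED
```

Because the rule is keyed by the host's own address, port 443 can be opened for two hosts at once, which a single IPv4 address behind NAT cannot do.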

I guess the assumptions I have at the moment are that my router is a designated appliance for networking concerns and doing all the config there makes sense, and secondly that any client device could be misconfigured. Or worse, it was properly configured by me but then the OS vendor pushed an update and now it’s misconfigured again.

That still holds true, the router/firewall has absolute control over what goes in and out of the network on which ports and for which hosts. I would never expose a client directly to the internet, doesn’t matter if IPv4 or IPv6. Even servers are not directly exposed, they still go through firewalls.

Anything connected to an untrusted network should have a firewall, doesn’t matter if it’s IPv4 or IPv6.

There’s functionally no difference between NAT on IPv4 or directly allowing ports on IPv6, they both are deny by default and require explicit forwarding. Subnetting is also still a thing on IPv6.

If anything, IPv6 is more secure because it’s impossible to do a full network scan. My ISP assigned 4,722,366,482,869,645,213,696 addresses just to me. Good luck finding the used ones.
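That number is 2^72, which is what a /56 prefix contains. A quick way to check it, assuming a /56 delegation and using a documentation prefix as a stand-in for the real one:

```python
import ipaddress

# 2001:db8::/56 is a documentation prefix standing in for the real delegation.
delegated = ipaddress.ip_network("2001:db8::/56")

address_count = delegated.num_addresses
print(f"{address_count:,}")  # prints 4,722,366,482,869,645,213,696
```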

With IPv4 if you spin up a new service on a common port it usually gets detected within 24h nowadays.

Off the top of my head, why did you set the prefix to 0x1? I was under the impression that it only needs to be set if there are multiple vlans

I have multiple VLANs, 0x1 is my LAN and 0x10 is my DMZ for example. I then get IP addresses abcd:abcd:a01::abcd in my LAN and abcd:abcd:a10::bcdf in my DMZ.

However, I get a /56 from my ISP which gets subnetted into /64s. I heard it’s not ideal to subnet a /64, but you might want to double check what you really got.
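How the prefix ID ends up in the address can be sketched like this. The delegated prefix `2001:db8:abcd:a00::/56` is a made-up documentation prefix and `subnet_for_prefix_id` is a helper written for this example, not something OPNsense exposes.

```python
import ipaddress


def subnet_for_prefix_id(delegated: str, prefix_id: int) -> ipaddress.IPv6Network:
    """Return the /64 inside `delegated` selected by `prefix_id`."""
    network = ipaddress.IPv6Network(delegated)
    # Number of bits between the delegated prefix and the /64 boundary.
    available_bits = 64 - network.prefixlen
    if available_bits < 0 or not 0 <= prefix_id < 2 ** available_bits:
        raise ValueError("prefix ID does not fit into the delegated prefix")
    # The prefix ID sits directly above the 64-bit interface identifier.
    base = int(network.network_address) + (prefix_id << 64)
    return ipaddress.IPv6Network((base, 64))


lan = subnet_for_prefix_id("2001:db8:abcd:a00::/56", 0x1)
dmz = subnet_for_prefix_id("2001:db8:abcd:a00::/56", 0x10)
print(lan)  # 2001:db8:abcd:a01::/64
print(dmz)  # 2001:db8:abcd:a10::/64
```

With a /56 there are 8 bits for the prefix ID, so 256 possible /64 networks. With only a /64 from the ISP there are zero bits left, so any prefix ID other than 0 raises the error above.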

what are your rules for the WAN side of the firewall?

Only IPv4 + IPv6 ICMP, the normal NAT rules for IPv4 and the same rules for IPv6 but as regular rules instead of NAT rules.

My LAN interface is only getting an LLA so maybe it’s being blocked from communicating with the ISP router.

If you enable DHCPv6 in your network your firewall should be the one to hand out IP addresses, your ISP assigns your OPNsense the prefix and your OPNsense then subnets them into smaller chunks for your internal networks.

It is possible to do it without DHCPv6 but I didn’t read into it yet since DHCPv6 does exactly what I want it to do.

I’m no expert on IPv6 but here’s how I did it on my OPNsense box:

WAN interface (probably already done)
LAN interface, use Track interface on IPv6, track the WAN interface and choose a prefix ID like 0x1
::eeee to ::ffff, you don’t have to type the full IP)
Advertisements to Managed and Priority to High

After that your DHCP server should serve public IPv6 addresses inside of your prefix and clients should be able to connect to the internet.

A few notes:

This seems like common sense, no?

Hindsight is 20/20. As seen in the post, there’s not that many APIs that don’t just blindly redirect HTTP to HTTPS since it’s sort of the default web server behaviour nowadays.

Probably a non-issue in most cases since the URLs are usually set by developers but of course mistakes happen and it absolutely makes sense to not redirect HTTP for APIs and even invalidate any token used over HTTP.
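A minimal sketch of that behaviour, assuming a hypothetical in-memory token store and a handler that only receives the request scheme and the bearer token. The status codes are one reasonable choice, not something the post prescribes.

```python
from typing import Optional, Set, Tuple

# Hypothetical in-memory token store; a real API would use its own backend.
active_tokens: Set[str] = {"token-a", "token-b"}


def handle_request(scheme: str, token: Optional[str]) -> Tuple[int, str]:
    """Return (status code, message) for an API request."""
    if scheme != "https":
        # The token already crossed the wire in plain text, so treat it as leaked.
        if token is not None:
            active_tokens.discard(token)
        return 403, "HTTPS required"
    if token not in active_tokens:
        return 401, "invalid or revoked token"
    return 200, "ok"
```

The point is that the plain HTTP request fails loudly instead of being redirected, so the mistake shows up during development rather than silently leaking credentials.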

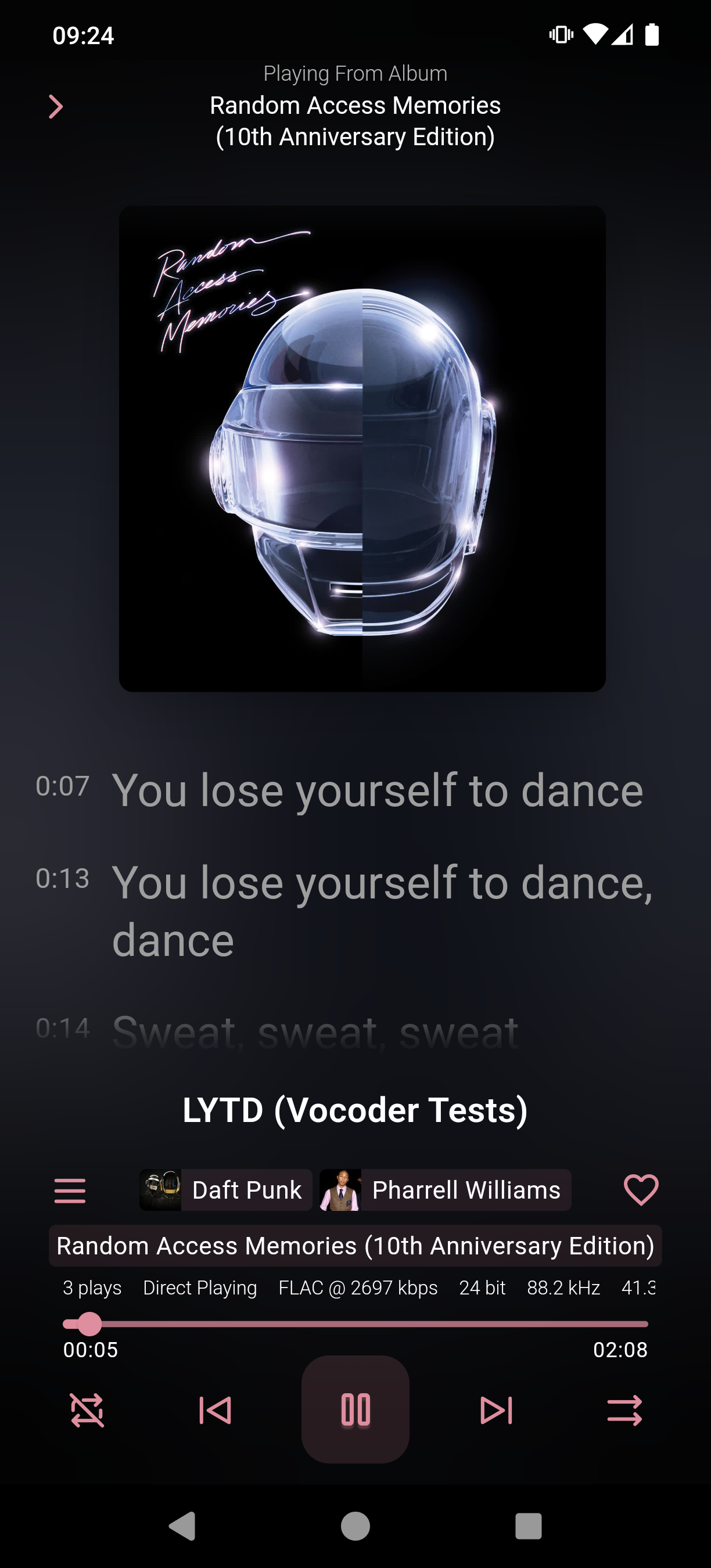

Do a library rescan on your music library and then download the latest Finamp beta from here: https://github.com/jmshrv/finamp/releases

Lyrics should work then:

OP didn’t ask for unpopular languages but for languages you want to be more popular.

I also want C# to be more popular, it’s a fantastic language.

Check out Picard, I switched to it when I switched to Linux: https://flathub.org/apps/org.musicbrainz.Picard

I don’t run Pi-hole but quickly peeking into the container (docker run -it --rm --entrypoint /bin/sh pihole/pihole:latest) the folder and files belong to root with the permissions being 755 for the folder and 644 for the files.

chmod 700 most likely killed Pi-hole because a service that is not running as root will be accessing those config files and you removed their read access.
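To see what the different modes mean for a service user that is neither the owner nor in the owning group (an assumption here, I did not check which user Pi-hole actually runs as), you can decode the bits with Python's `stat` module:

```python
import stat


def describe(mode: int) -> str:
    """Render permission bits the way `ls -l` shows them (without the type flag)."""
    return stat.filemode(mode)[1:]


def others_can_read(mode: int) -> bool:
    """True if users who are neither owner nor group member may read."""
    return bool(mode & stat.S_IROTH)


for mode in (0o755, 0o644, 0o700):
    print(oct(mode), describe(mode), others_can_read(mode))
```

755 and 644 leave the read bit set for everyone else, 700 clears it, which is why the non-root service can no longer read its config.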

Also, I’m with the guys above. Never chmod 777 anything, period. In 99.9% of cases there’s a better way.

Flathub is actually fairly strict with its submissions, probably too much work for most fake submissions to follow the PR guidelines.

https://docs.flathub.org/docs/for-app-authors/requirements

https://github.com/flathub/flathub/pulls?page=2&q=is%3Apr+is%3Aclosed

They have a different architecture so it comes down to preference.

Docker runs a daemon that you talk to in order to deploy your services. Podman does not have a daemon, you either directly use the podman command to deploy services or use systemd to integrate them into your system.

HTTP/3 is UDP as well but only on port 443.

I have exactly the setup you described, a Raspberry Pi with an 8 TB SSD parked at a friend’s place. It connects to my network via WireGuard automatically and just sits there until one of my hosts running Duplicati starts to sync the encrypted backups to it.

Has been running for 2 years now with no issues.